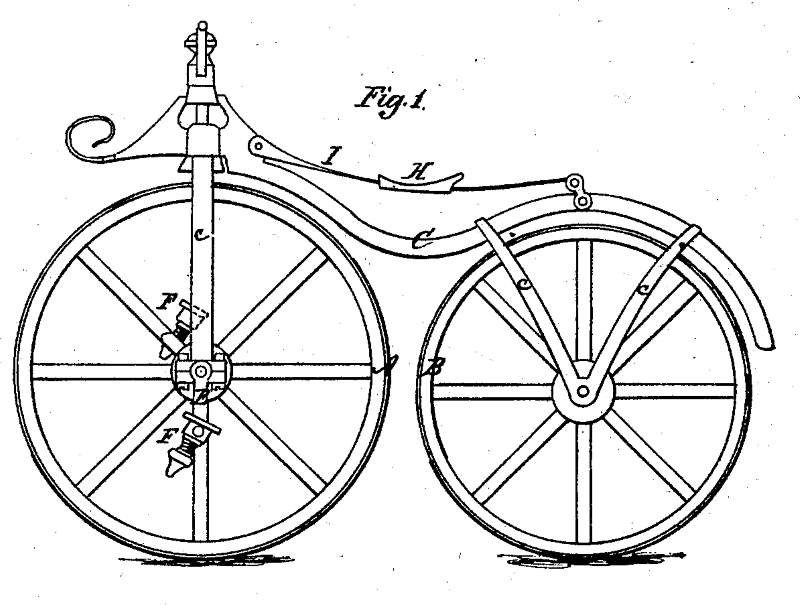

In 1979, Kane Kramer invented a portable digital music player. He sought patents in numerous countries, including the United States where he was granted US Patent No. 4,667,088.

The Kramer portable digital music player used credit-card-sized memory cards, each capable of holding 3.5 minutes of music (i.e., roughly one song). A record shop could stock blank cards and, at the time of sale, load them on demand from a digital music data store kept in the shop.

A media outlet asserts that Kramer was the “inventor behind the iPod.” That statement probably goes too far: it overstates the significance of Apple citing Kramer’s patent and invention as prior art in a patent lawsuit.

Regardless, Kramer’s device was an early portable digital music player. The problem for Kramer was that his device never became a commercial success, and his patents later lapsed because he was unable to pay the patent maintenance fees.

We don’t know why Kramer’s device was not a commercial success. It might be that in 1979 there was no Internet to make electronic distribution of music easy, so buyers still had to go to a physical music store. It might be that each memory card could hold only one song. Or it might be that the other elements needed for commercial success did not yet exist in the 1980s, when Kramer attempted to commercialize the invention.

Maybe Kramer’s music player was not within “the adjacent possible.” Stated another way, maybe it was before its time.

Steven Johnson discusses “the adjacent possible” in his book, Where Good Ideas Come From. The adjacent possible sets an outer limit on how far your invention can advance beyond the current state of the art. It is one factor to consider when evaluating the potential commercial success of your invention.

The Adjacent Possible from Evolutionary Biology

Johnson notes that scientist Stuart Kauffman coined the term “the adjacent possible” to describe the set of first-order combinations of molecules that were possible given the composition of the earth’s environment before life emerged:

The lifeless earth was dominated by a handful of basic molecules: ammonia, methane, water, carbon dioxide, a smattering of amino acids and other simple organic compounds… Think of all those initial molecules, and then imagine all the potential new combinations that they could form spontaneously… trigger all those combinations [and] you would end up with most of the building blocks of life: the proteins that form the boundaries of cells; sugar molecules crucial to the nucleic acids of our DNA.

…

But you would not be able to trigger chemical reactions that would build a mosquito, or a sunflower, or a human brain… The atomic elements that make up a sunflower are the very same ones available on earth before the emergence of life, but you can’t spontaneously create a sunflower in that environment, because it relies on a whole series of subsequent innovations that wouldn’t evolve on earth for billions of years: chloroplasts to capture the sun’s energy, vascular tissues to circulate resources through the plant, DNA molecules to pass on sunflower building instructions to the next generation.

On the pre-life earth, formaldehyde was within the adjacent possible, but more complex organisms were not. Those organisms required intermediate building blocks that did not yet exist.

The Difference Engine and The Analytical Engine

The adjacent possible applies not only to evolutionary biology but also to human-made inventions.

Johnson uses two inventions of Charles Babbage, the Difference Engine and the Analytical Engine, to show when an invention is within the adjacent possible and when it is not. Babbage was a nineteenth-century British inventor who is now often called the father of modern computing.

The Difference Engine was an advanced mechanical calculator, described as a very complex “fifteen-ton contraption, with over 25,000 mechanical parts, designed to calculate polynomial functions that were essential to creating the trigonometric tables crucial to navigation.” Although Babbage did not build the Difference Engine during his lifetime, it was within the adjacent possible of Victorian technology. According to Johnson, many improvements in the field of mechanical calculation followed during that period, building on Babbage’s architecture.

On the other hand, Babbage’s Analytical Engine was not within the adjacent possible. On paper, the Analytical Engine was the world’s first programmable computer. But it was so complicated that most of it never got past the blueprint stage:

Babbage’s design for the engine anticipated the basic structure of all contemporary computers: “programs” were to be inputted via punch cards…; instructions and data were captured in a “store,” the equivalent of what we now call random access memory, or RAM; and calculations were executed via a system that Babbage called “the mill,” using industrial-era language to describe what we now call the central processing unit, or CPU.

Babbage had most of the system sketched out by 1837, but the first true computer to use this programmable architecture didn’t appear for more than a hundred years. While the Difference Engine engendered an immediate series of refinements and practical applications, the Analytical Engine effectively disappeared from the map. Many of the pioneering insights that Babbage had hit upon in the 1830s had to be independently rediscovered by the visionaries of World War II-era computer science.

According to Johnson, implementing the Analytical Engine with mechanical gears and switches would have been extremely complex and difficult to maintain. On top of that, it would have been slow. For the Analytical Engine to work at all, or to work well, its logic needed to be implemented with electronics rather than mechanical gears. Therefore, the Analytical Engine was not within the adjacent possible in 1837.

YouTube

Johnson leaves us with one more modern example: YouTube. Johnson notes that if YouTube had been created ten years earlier, in 1995, it would have failed. In 1995 most web users were on slow dial-up connections, and it could take an hour to download a standard YouTube clip. In 1995, YouTube’s innovation was not within the adjacent possible; ten years later, with broadband Internet and Adobe’s Flash technology, it was.

Invention Evaluation Factor: Is it Within the Adjacent Possible?

One question you should answer when evaluating your invention is whether the invention is within the adjacent possible.

It is not enough to consider whether it is technically possible to create your invention. The Kramer portable digital music player was technically possible to create at the time. But the missing infrastructure (there was no Internet) and the limited memory storage capacity available at the time may have constrained its ability to become a commercial success.

At least for human-made inventions, applying the adjacent possible principle in practice means considering not only whether the invention is technically possible to make, but also whether the infrastructure and other elements needed for commercial success exist at the time.